i.MX95

i.MX 95 Applications Processor Family: High-Performance, Safety-Enabled Platform with eIQ® Neutron NPU

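Deploy advanced vision, language, and multimodal generative AI directly at the edge, with no dependence on the cloud. This demo shows how NXP combines i.MX MPUs with the scalable Ara240 DNPU to support workloads ranging from real-time vision inference to large-scale multimodal generative AI models, delivering high-performance, low-latency, privacy-preserving intelligence for industrial and automotive applications.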

i.MX 95 Applications Processor Family: High-Performance, Safety-Enabled Platform with eIQ® Neutron NPU

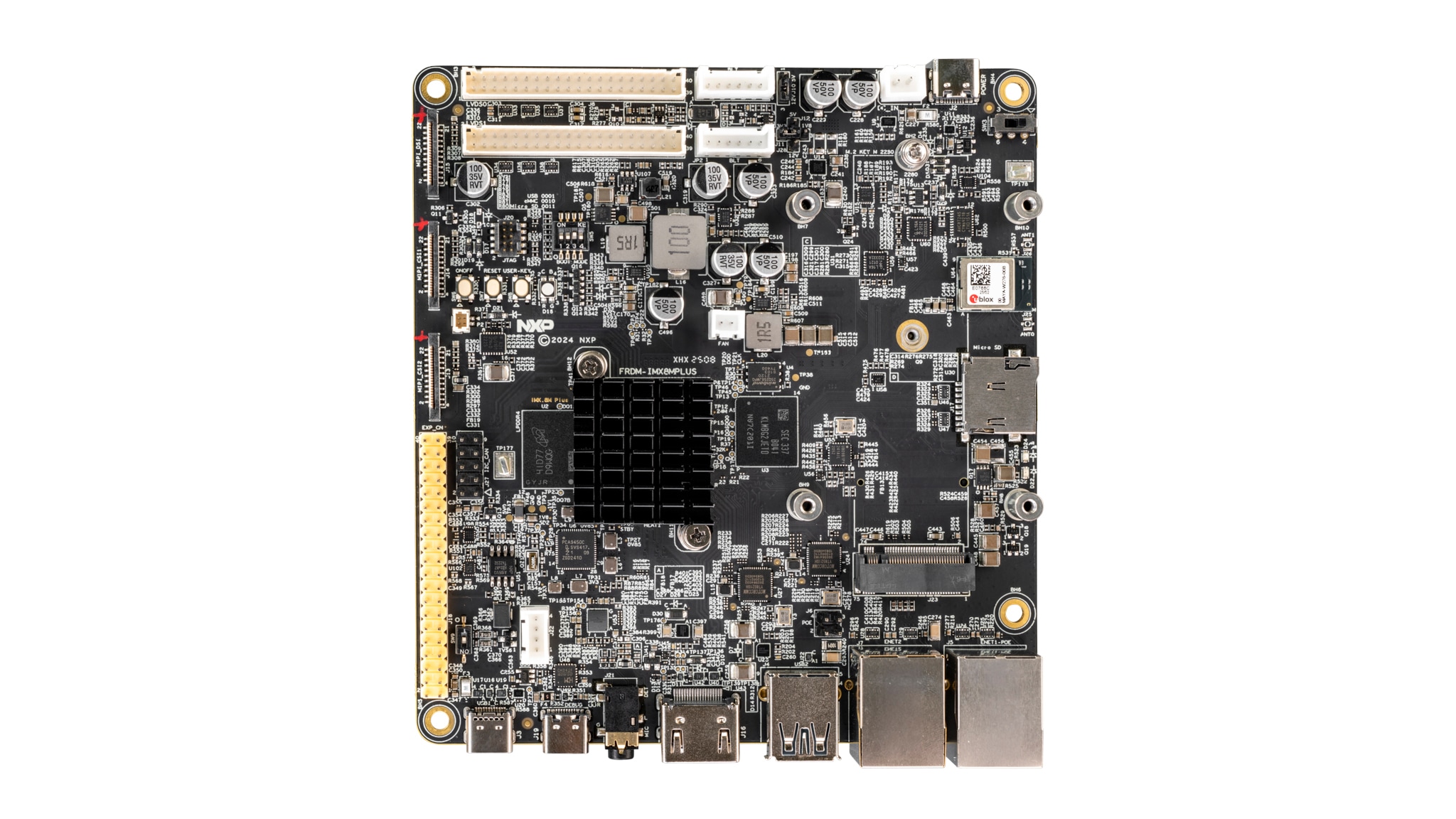

i.MX 8M Plus: Arm® Cortex®-A53 for Machine Learning, Vision, Multimedia, and Industrial IoT

Ara240 | Discrete Neural Processing Unit (DNPU)

FRDM i.MX 8M Plus Development Board

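By combining the high-performance i.MX 95, the versatile i.MX 8M Plus, and the powerful Ara240 DNPU, NXP has built a flexible edge AI platform. The platform scales from efficient vision inference to large-scale multimodal and generative AI workloads, delivering high-performance, low-latency, privacy-preserving intelligence across industrial, IoT, and automotive applications.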

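This demo shows how vision, language, and generative AI models work together on-device to deliver real-time analytics and low-latency, privacy-preserving intelligence without relying on the cloud.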

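By running a 32-billion-parameter LLM entirely on-device, this demo shows how sensitive data can stay local, enabling secure, trustworthy generative AI in industrial automation, robotics, logistics, healthcare, and smart infrastructure without the risk of cloud exposure or data leakage.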
