
NXP Customer Success Stories

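Our innovation and technical expertise help companies develop breakthrough solutions for a smarter, safer, and more sustainable world. We share our customers' success stories and highlight the people creating the next generation of breakthrough technology.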

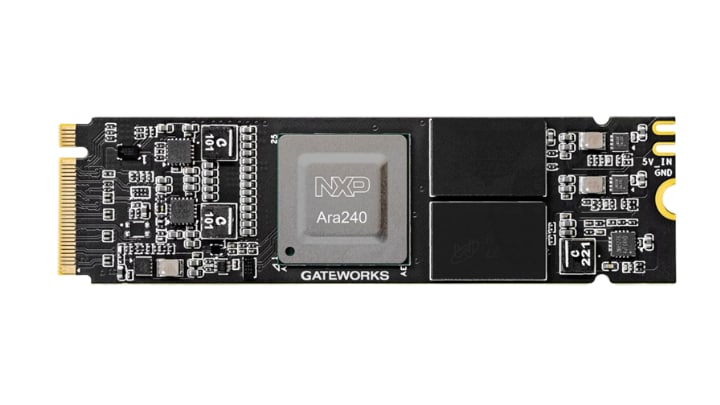

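Gateworks, an NXP Gold Partner, is a leading provider of embedded computing solutions for edge AI and industrial connectivity. Drawing on decades of experience and deep integration within the NXP ecosystem, Gateworks delivers industrial-grade platforms manufactured in the USA, complemented by AI acceleration solutions such as the GW16168 M.2 AI accelerator card.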

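In fields such as computer vision, robotics, and the industrial IoT, engineers increasingly need solutions that are both powerful and efficient, and demand for AI processing at the edge is growing rapidly as a result. In response, Gateworks has partnered with NXP to launch the GW16168 M.2 AI accelerator card, a solution built to integrate high-performance, industrial-grade AI processing directly into embedded edge platforms.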

Accelerate your design with a platform built for real-world AI deployment. Learn more about the GW16168 M.2 AI accelerator card.

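For many embedded systems, supporting modern AI workloads while preserving overall system performance, power efficiency, and development schedules is a major challenge. Engineers are forced to trade compute capability against system complexity. Common challenges include: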

These constraints not only slow the pace of innovation but also drive up the total cost of deploying AI at scale.

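The GW16168 is built on the NXP Ara240 discrete neural processing unit (DNPU), which separates AI processing from the host CPU. This architecture provides up to 40 eTOPS of dedicated inference performance, so complex AI workloads can run independently without affecting system responsiveness.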

Benefits of the solution include:

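As AI continues to move to the edge, developers need solutions that are not only powerful but also scalable, reliable, and easy to integrate. The GW16168 combines NXP's advanced AI processing technology with Gateworks' industrial design, USA-based manufacturing, and long-term support to provide a complete solution for deploying AI at the edge.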
